3 predictions on the future of software after AI

Key takeaways: a multi-model strategy's necessity, emerging AI infra, LLMs as abstractions.

Engineer’s Codex is a publication about practical lessons from real-world software engineering.

The OpenAI saga was a Succession-level drama in the tech world, with Sam Altman going from CEO to Microsoft to CEO again in less than a week.

I’m not a reporter. There are many great articles recapping the OpenAI drama online.

Instead, I wanted to think critically about what the OpenAI incident may mean for software engineers and the industry.

I had three main ideas.

A multi-model strategy will become necessary

A multi-model strategy is when an organization or application uses multiple models, like LLMs, to serve their AI use cases. This provides a safety net in case access to a model goes offline.

This is similar to how companies have a multi-cloud strategy. However, a multi-cloud strategy mostly makes sense once you’re at a large enough scale where a high level of vendor redundancy is needed. For most companies, it doesn’t make sense. It’s also quite hard to manage. I’d argue that a multi-model strategy is more necessary and easier to achieve than a multi-cloud strategy.

Models will become more “switchable”, unlike databases. Databases contain specific data, while models can all be trained and fine-tuned on the same dataset. As the field progresses, I believe the baseline quality of models will converge, while the upper echelons of model quality will be reserved for those with the most proprietary datasets (or the ones that solve synthetic data generation).

This is supported by OpenAI researcher James Betker, who wrote a fascinating piece called The “it” in AI models is the dataset.

He finds that “model behavior is not determined by architecture, hyperparameters, or optimizer choices. It’s determined by your dataset, nothing else.”

So when you refer to “Lambda”, “ChatGPT”, “Bard”, or “Claude”, it’s not the model weights that you are referring to. It’s the dataset.

Eventually, most datasets will be available to everyone. That means most models will reach a “good enough” baseline level of performance, where it will be quite feasible to use different models as drop-in replacements.

This also means that the model will probably not be the moat.

Local models are important. You “own” your data and the fine-tuned model, you get versioning, and more. Issues like GPT-4 abruptly dropping in quality (which has been debated extensively online) become less of a concern.

The parallel I’d make here for cloud is using a managed Backend-as-a-Service, like Firebase or Supabase, versus deploying a database directly on a box like EC2. The latter takes more maintenance and “DevOps” work, but also provides more control.

Is there a need for AI-specific infrastructure and tooling?

A downstream effect of the rising importance of a multi-model strategy is a larger need for reliable “AI infrastructure” and tooling. What does this mean? I don’t have full clarity on this myself yet, but I’ve noted some ideas.

The OpenAI scare meant that some people and companies started testing local models for the first time. Local models have seen immense progress in quality and usability since the release of Meta’s LLaMA. Furthermore, cloud providers like Google Cloud already provide instances of LLaMA and Claude. If one model is down, a program depending on an LLM should still work seamlessly, albeit with some possible quality loss.

See: Georgi Gerganov’s “Using llama.cpp with AWS instances”.
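To make the "if one model is down, the program should still work" idea concrete, here is a minimal sketch of a fallback chain. Everything in it is an assumption for illustration: `complete_with_fallback`, `hosted_model`, and `local_model` are made-up names, and the two model functions are stand-ins for real clients rather than any vendor's actual API.

```python
from __future__ import annotations

from typing import Callable, Sequence

# Assumption: a "provider" is any function that maps a prompt to a completion.
Provider = Callable[[str], str]


class AllProvidersFailed(RuntimeError):
    """Raised when every configured provider raised an error."""


def complete_with_fallback(
    prompt: str, providers: Sequence[tuple[str, Provider]]
) -> tuple[str, str]:
    """Try each (name, provider) in order.

    Returns (name, completion) from the first provider that succeeds.
    """
    errors: list[str] = []
    for name, provider in providers:
        try:
            return name, provider(prompt)
        except Exception as exc:  # real code should catch provider-specific errors
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Hypothetical stand-ins: a hosted API that is down and a local model that works.
def hosted_model(prompt: str) -> str:
    raise ConnectionError("hosted API unavailable")


def local_model(prompt: str) -> str:
    return f"[local] {prompt}"


used, text = complete_with_fallback(
    "Summarize this ticket",
    [("hosted", hosted_model), ("local", local_model)],
)
print(used, text)
```

The ordering of the list is the policy: put the highest-quality model first and accept the "possible quality loss" of whatever comes after it. Returning the provider name alongside the text makes it easy to log which model actually answered.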

More infrastructure will be needed to compare quality between models across prompts and use cases. To make those comparisons, applications built on LLMs will probably need more in-depth telemetry, logging, and backups for prompts, usage, and more.

A lot of these are just applying the basics of normal web applications to LLM-based applications.
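As a rough sketch of what that telemetry could look like, the snippet below sends the same prompt to several models and records the output and latency of each call as JSON Lines. The names `CallRecord`, `compare_models`, and `to_jsonl` are my own, and the two "models" are toy lambdas standing in for real clients.

```python
import json
import time
from dataclasses import asdict, dataclass
from typing import Callable, Dict, List


@dataclass
class CallRecord:
    model: str
    prompt: str
    output: str
    latency_s: float


def compare_models(
    prompt: str, models: Dict[str, Callable[[str], str]]
) -> List[CallRecord]:
    """Send the same prompt to every model and record output and latency."""
    records = []
    for name, call in models.items():
        start = time.perf_counter()
        output = call(prompt)
        elapsed = time.perf_counter() - start
        records.append(CallRecord(name, prompt, output, elapsed))
    return records


def to_jsonl(records: List[CallRecord]) -> str:
    """Serialize records as JSON Lines: one call per line, ready for a log file."""
    return "\n".join(json.dumps(asdict(r)) for r in records)


# Hypothetical stand-ins for two model clients.
models = {
    "model_a": lambda p: p.upper(),
    "model_b": lambda p: p[::-1],
}

records = compare_models("hello", models)
log_text = to_jsonl(records)
print(log_text)
```

With prompts and outputs logged side by side like this, quality comparisons (human review, automated scoring, or regression checks) can run later against the stored records instead of against live traffic.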

I’m sure we’ll eventually see some unique AI infrastructure setups in the same way companies like Google/Facebook/Amazon published landmark papers on distributed systems.

Another good article on this is Latent Space’s “Rise of the AI Engineer.”

On second thought, maybe “AI infrastructure” here can be narrowed down to “cross-model infrastructure.”

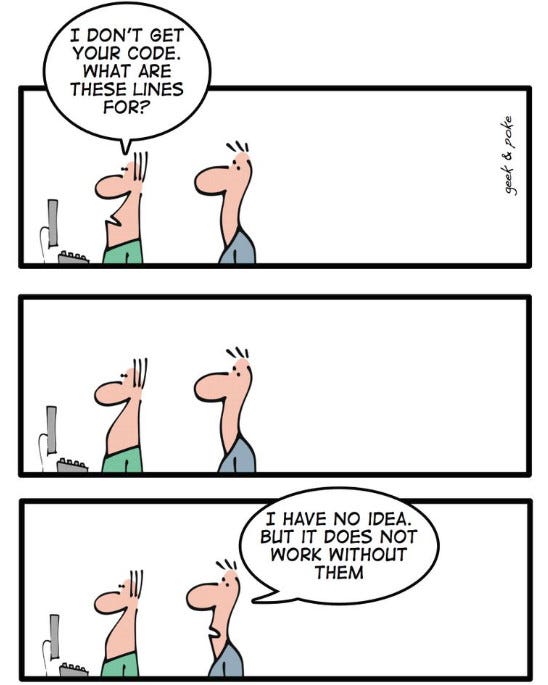

Abstraction layers like LLMs actually create more work, not less

The need for better AI- or LLM-specific infrastructure, along with the host of problems that come with the non-determinism of LLMs, means that there’s more software work ahead of us, not less. Abstraction layers like LLMs create more possibilities and thus, more work.

Is this a good thing or a bad thing? I’m not sure.

A great example of this is frontend development. Each new framework abstracts away more and more of the core work happening to the DOM. This makes web development the mess it is today. Having a deep understanding of what's going on under the hood along with how to use the layers of abstractions the right way is difficult and arguably necessary to be one of the best frontend devs (see: Malte Ubl).

Most software work done today is done at the application layer because lower-level architecture is abstracted away.

In the same way, LLMs are an abstraction wrapper around the “real work”: code. While coding and boilerplate are being abstracted away by LLMs, those with a deep understanding of the language/framework they’re using and the code they’re writing will probably be able to steer LLMs in the best direction.

I believe the demand for software will only go up. LLMs are here to stay and will spawn a whole new class of work, like AI infrastructure, along with faster development speeds overall (plus a new class of hard-to-find, nasty bugs).

What do you think?

Let me know what you think about these predictions/thoughts in the comments. Happy to discuss, debate, and be wrong openly. 🙂

I had this thought and implemented it months ago. However, recently I decided to use the backend I had built on another project but remove the providermanager and hardcode OpenAI as the provider. This was to make the application more lightweight, as the client didn’t “need” other providers🙄. I then shipped the product. Two days later the debacle hit and we had serious outages across many of our apps, though most switched to other providers automatically. The only one that was mostly unavailable? You guessed it: the one without the ability to use multiple LLM providers.

Really interesting point about the "multi-model strategy". I think it's super relevant now that a ton of small 'startups' depend 100% on OpenAI.

Regarding the abstractions - here I tend to disagree. I think that soon, being a PM will be enough to get a working product to market. You'll learn to tweak the LLM, and as LLMs get better, they'll fix the nasty bugs themselves (you'll just describe the bug). Currently engineers do have an advantage, as they can 'fill in' the holes where needed, but I believe it's temporary.

PMs are really prompt engineers for developers - they know how to talk to us in our language, they give us Figma, user stories, and so on. It will be the same, but with LLMs :)